Soft Wetware

Seeing the forest through the tech trees.

2013-07-29

Insofar, so good

The word "insofar" needs a comparative form. If it has one, the word will have a purpose in life, insofar as it's useful when you can compare the extent to which two clauses hold -- which is insofarther than it's useful when you can only equate them. And if it has a superlative form as well, it'll be useful insofarthest of all.

2013-06-10

Exaptationist vs adaptationist Singularity, and engineerability

In terms of total biological/social/technological complexity, human society may still be closer to the first single-celled lifeforms that colonized the Earth 4 billion years ago than it is to a technological singularity. But in terms of the logarithm of complexity, we're nearly there -- we have most of the orders of magnitude of progress we'll need. And because positive feedback loops continue to provide exponential or faster growth, that's what matters.

The Singularitarian's burden

The other day, it occurred to me how impressive it was that so much progress had been made without anyone or anything having to concern themselves with what their own or any other species was evolving into, or how quickly.

If we'd come that far without a targeted effort, how much faster could we progress toward the Singularity if we organized for that explicit purpose? Or if we weren't in a hurry, could we reduce the risk of an unfriendly Singularity, i.e. get some "Terminator insurance"? The standard assumption among Singularitarians seems to be that switching to "engineered evolution" is desirable if not essential, now that we understand the nature and dynamics of the positive-feedback loops involved.

But the first attempt to engineer humanity's evolution in a post-Darwinian framework was eugenics, which was clearly premature given Victorian-era science, and has clearly done more harm than good. Attempts to engineer technology's evolution (MIRI, Lifeboat Foundation, etc.) haven't caused a Holocaust yet, but they've led to a scientist receiving two death threats. At best, they've been a distraction, and they haven't produced any innovations that could feed the feedback loops (such as any algorithms a programmer could actually implement, compile, run, and get useful output from). And if it's possible to engineer the Singularity, then why did Ray Kurzweil get out of a successful engineering career (while allegedly in great health) when he discovered the concept?

2013-01-04

Anxiety, workaholism and transhumanism

Max More, CEO of Alcor and noted transhumanist writer, sent me an e-mail last month that left me concerned about a possible epidemic of anxiety and workaholism within the transhumanist community. He wrote:

If you are actively working to bringing about technological advance, then clearly you are not a "passive singularitarian".

For what my opinion is worth (and I've extensively studied practical psychology, especially cognitive therapy), I strongly agree that "should" thoughts are emotionally and psychologically dangerous. [...] I'm concerned that Singularity-thinking can be a strong source of "should" thinking.

I share Max's concerns. Many transhumanists have probably been harmed by "should" thinking, as I was; and those of us on the autism spectrum are likely to suffer from anxiety without knowing it (more on that in my next post). I hope that by making my own battle with anxiety public, I can open a much-needed dialogue.

2012-12-06

The opposite of creationism: an antimetabole

Singularitarianism is the opposite of creationism.

Creationists say God created humans in the shockingly recent past. Singularitarians say humans will create God in the shockingly near future.

When creationists look at evolution, they see the engineering of the universe. When Singularitarians look at engineering, we see the evolution of the universe.

Creationists say you should thank God for giving you computers. Singularitarians say you'll thank computers for giving you God.

2012-09-12

Left-of-center, but not left behind in transhumanism

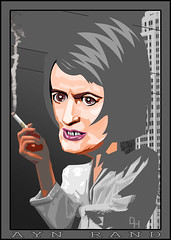

| The face of the future?! (Image credit: DonkeyHotey) |

What did Ayn Rand have to do with transhumanism? If transhumanism wasn't exclusively libertarian, why promote an author better known for libertarianism than for anything else? Why risk alienating the Left unnecessarily?

I'd recently read, in Cory Doctorow's review of a book, that transhumanism had originated among libertarians, that it had faced plenty of criticism from liberals, and that the book's effort to reconcile it with democratic socialism was long overdue. Was this pessimistic picture accurate? Would being a democratic socialist alienate me from transhumanism and vice-versa?

2012-08-22

Mind uploading: the write answer

| A 1st generation Apple iPad showing iBooks, with the book Alice's Adventures in Wonderland. (Photo credit: Wikipedia) |

But if I ask whether or not the book you're reading is Alice in Wonderland, I don't care what kind of ink or paper it's printed on, or whether or not it's printed at all. As far as the reader is concerned, a book is text, not the configuration of matter used to store that text. Nobody questions whether or not an e-book can be Alice, just because Alice was originally a printed book.

2012-08-08

Dehumanize me!

| The usual chart explaining the Uncanny Valley concept. (Photo credit: Wikipedia) |

(While this hypothesis hasn't been confirmed to my knowledge, it's the only plausible explanation I can find for why Aspies are blind to our own and each other's publicly-perceived weirdness. The Uncanny Valley seems to be a fairly new, but very active, field of research. I'm treating the Uncanny Valley effect as a working hypothesis, while I wait for it to pass the test of science.)

Getting out of it on the human side won't be an option, at least until a way is found to reliably (and therefore without conscious effort) suppress the noticeable effects of Asperger Syndrome. It has to be done without throwing out the baby with the bathwater (apparently AS may be contributing to my talent in computer science). I figure if that's possible at all, it will require AI and/or neuroscience to reach near-Singularity levels.

Plus, staying on the right side of the Valley may prove overly restrictive if I need an extra pair of arms, or if the new chip I want to put in my brain needs a heat sink. A recent nasty incident, involving a camera eye, suggests that being an early-adopter visible cyborg (as I plan to be) probably won't help in general.

But there's another option as well: climb out the other side of the Uncanny Valley, and become a cyborg in some non-humanoid form. Instead of rejecting me as an overly abnormal human, people can accept me as something else, and my human side will become a pleasant surprise rather than a let-down. This will probably be technologically feasible by 2030, and I'm already far from alone in wanting a change of body plan. I'd considered the idea before, but only in abstract terms. I'd assumed that looking more or less human for as long as possible would help with social acceptance.

It's a surprisingly Troperiffic conclusion. MySpeciesDothProtestTooMuch that I'm a HumanoidAbomination, because neurotypical people are hard-wired to be ObliviouslyEvil AbsoluteXenophobes. Until yesterday, my own WeirdnessCensor kept me so WrongGenreSavvy that I thought I was in AFormYouAreComfortableWith, and my plans to keep it that way would have been subverted. WhatMeasureIsAHumanoid? But if I become a OneWingedAngel, people will realize how much of me has been HumanAllAlong, and that's what any ProHumanTranshuman would want.

Time to start researching designs for non-human cyborgs, and deciding what I want to be when humanity grows up. Unlike the typical spiritualistic Otherkin, I'm not substrate-chauvinistic enough to believe I have a "true" form. But because I'm not locked into one, I have a wide-open catalog to pick from. Affective psychology class is tough; let's go shopping!
